Most ecommerce brands waste 40-60% of their advertising budget on underperforming creatives that should have been killed days ago, while simultaneously under-investing in winning creatives that deserve aggressive scaling. The difference between mediocre marketing efficiency and exceptional performance isn't better creative ideas—it's systematic creative analysis that identifies losers fast enough to prevent budget waste and recognizes winners early enough to capture maximum opportunity before fatigue sets in.

Learning how to analyze ad creative performance transforms advertising from expensive experimentation into predictable revenue generation. The best DTC brands don't rely on intuition, platform recommendations, or gut feeling to make creative decisions. They implement data-driven frameworks that objectively evaluate every creative against clear performance thresholds, killing underperformers within 48-72 hours while scaling winners aggressively before competitors discover the same angles.

Why Most Brands Fail at Creative Performance Analysis

Before diving into effective frameworks, understanding why creative analysis fails at most companies reveals what to avoid when building your own systems.

The Vanity Metric Trap: Optimizing for Engagement Instead of Profit

The most common creative analysis failure is optimizing for metrics that feel good but don't drive business results. High engagement rates, impressive click-through rates, and viral social sharing all create dopamine hits for marketing teams—but none directly predict profitability.

A creative generating 8% CTR but attracting bargain hunters who never repurchase destroys long-term economics despite appearing successful in initial analysis. Conversely, a creative with 2% CTR that attracts high-intent customers with 3x average lifetime value deserves scaling despite seeming underwhelming on surface metrics.

Effective creative analysis prioritizes profit-driving metrics: customer acquisition cost relative to lifetime value, first-purchase contribution margin, repeat purchase rates, and ultimately, incremental revenue generated. Engagement metrics serve as leading indicators but never as primary decision criteria.

The Statistical Significance Paralysis

Many analytically minded marketers fall into the opposite trap: waiting for perfect statistical significance before making any creative decisions. They run creatives for two to three weeks gathering data, only to discover the "winner" has already fatigued by the time they decide to scale it.

In fast-moving advertising environments where creative fatigue can strike within five to seven days, waiting for 95% statistical confidence often means missing the scaling window entirely. The best creative analysts accept lower statistical thresholds (80-85% confidence) and compensate with higher testing volume: they'd rather make ten decisions at 85% confidence than one decision at 95% confidence, because the advantages of testing velocity compound.

This doesn't mean ignoring statistics entirely. It means understanding the trade-off between statistical rigor and operational speed, calibrating decision thresholds to your specific business context and creative fatigue timeline.
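To make the 85%-versus-95% trade-off concrete, here is a minimal Python sketch of one common way to estimate confidence that one creative converts better than another: a pooled two-proportion z-test. The function name, the sample counts, and the choice of test are illustrative assumptions, not a method prescribed by any ad platform.

```python
import math


def confidence_b_beats_a(conv_a, clicks_a, conv_b, clicks_b):
    """Approximate one-sided confidence that creative B converts better than A.

    Pooled two-proportion z-test under a normal approximation; treat the
    result as rough when either creative has only a few conversions.
    """
    rate_a = conv_a / clicks_a
    rate_b = conv_b / clicks_b
    pooled = (conv_a + conv_b) / (clicks_a + clicks_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / clicks_a + 1 / clicks_b))
    if std_err == 0:
        return 0.5  # identical all-or-nothing results: no evidence either way
    z = (rate_b - rate_a) / std_err
    # Standard normal CDF written with the error function.
    return 0.5 * (1 + math.erf(z / math.sqrt(2)))


# Hypothetical test: A converts 20 of 1,000 clicks, B converts 28 of 1,000.
confidence = confidence_b_beats_a(20, 1000, 28, 1000)
act_at_85 = confidence >= 0.85
act_at_95 = confidence >= 0.95
print(f"confidence={confidence:.3f} act_at_85={act_at_85} act_at_95={act_at_95}")
```

In this made-up example the confidence lands between the two thresholds, so a team working at 85% would act now while a team holding out for 95% would keep waiting.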

The Sunk Cost Fallacy in Creative Production

Marketing teams often resist killing creatives that required significant production investment. That video shoot cost $5,000 and involved the founder—surely we should run it longer to "get our money's worth" even though data shows it underperforming.

This sunk cost thinking destroys marketing efficiency. The $5,000 is already spent whether you run the creative for three days or three months. Continuing to allocate budget to underperforming creatives doesn't recover past investment—it compounds losses by wasting future budget.

The Single-Metric Optimization Mistake

Analyzing creative performance through any single metric inevitably creates blind spots. Optimizing purely for CAC misses lifetime value differences. Focusing exclusively on ROAS ignores contribution margin variations across products. Prioritizing conversion rate overlooks volume potential.

The best creative analysts use multi-dimensional frameworks evaluating creatives across five to seven metrics simultaneously, weighting each appropriately for business context. This holistic approach prevents optimizing one metric while destroying overall profitability.
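One way to picture a multi-dimensional framework is a weighted scorecard. The sketch below is an assumption-laden illustration: the metric names, targets, weights, and the cap on each ratio are all hypothetical and would need to come from your own unit economics.

```python
# Hypothetical targets and weights; derive your own from unit economics.
SCORECARD = {
    "cac": {"target": 40.0, "weight": 0.35, "lower_is_better": True},
    "roas": {"target": 3.0, "weight": 0.25, "lower_is_better": False},
    "cvr": {"target": 0.02, "weight": 0.15, "lower_is_better": False},
    "ctr": {"target": 0.012, "weight": 0.10, "lower_is_better": False},
    "ltv_90d": {"target": 120.0, "weight": 0.15, "lower_is_better": False},
}


def composite_score(values, scorecard=SCORECARD, cap=2.0):
    """Weighted average of capped performance-to-target ratios (1.0 = on target)."""
    total_weight = sum(spec["weight"] for spec in scorecard.values())
    score = 0.0
    for name, spec in scorecard.items():
        actual, target = values[name], spec["target"]
        if spec["lower_is_better"]:
            ratio = cap if actual <= 0 else target / actual
        else:
            ratio = actual / target
        # Capping stops one spectacular metric from hiding weak ones.
        score += spec["weight"] * min(ratio, cap)
    return score / total_weight


# Two made-up creatives that differ only in CTR and 90-day LTV.
high_ctr_low_ltv = {"cac": 40.0, "roas": 3.0, "cvr": 0.02, "ctr": 0.08, "ltv_90d": 60.0}
low_ctr_high_ltv = {"cac": 40.0, "roas": 3.0, "cvr": 0.02, "ctr": 0.02, "ltv_90d": 360.0}

print(round(composite_score(high_ctr_low_ltv), 3))
print(round(composite_score(low_ctr_high_ltv), 3))
```

With these weights the 2% CTR creative that attracts high-LTV customers outscores the 8% CTR creative, which is the kind of trade-off a single-metric view hides.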

Essential Metrics for Creative Performance Analysis

Effective creative analysis requires tracking the right metrics with appropriate context and benchmarks. Here are the critical measurements that separate signal from noise.

Primary Performance Metrics: The Decision Drivers

These metrics directly inform kill-or-scale decisions and should be visible in real-time dashboards:

Customer Acquisition Cost (CAC): The total advertising cost divided by new customers acquired. This metric matters more than almost any other for creative analysis because it directly determines profitability when compared to customer lifetime value. Track CAC at the creative level, not just campaign or ad set level, to identify exactly which creatives acquire customers efficiently.

Return on Ad Spend (ROAS): Revenue generated divided by advertising spend. While ROAS provides quick directional signals, it's incomplete without CAC and LTV context. A creative with 3.5x ROAS might look worse than one with 4.2x ROAS, but if the former attracts customers with 2x higher LTV, it's actually superior long-term.

Cost Per Click (CPC): The amount paid for each click. CPC serves as an early warning indicator—rising CPC often signals creative fatigue before CAC increases become visible. Track CPC trends over the creative lifecycle to catch degrading performance early.

Click-Through Rate (CTR): The percentage of impressions that generate clicks. Like CPC, CTR functions primarily as a leading indicator. Declining CTR suggests audience fatigue with the creative concept, while consistently high CTR indicates strong resonance (though not necessarily profitable resonance without conversion context).

Conversion Rate (CVR): The percentage of clicks that result in purchases. CVR reveals creative quality beyond just attention-grabbing ability. Creatives with high CTR but low CVR attract clicks without purchase intent, while those with lower CTR but high CVR might be targeting more efficiently.

Cost Per Acquisition (CPA) by Awareness Stage: Track acquisition costs separately for cold, warm, and hot audiences. The same creative might perform excellently for retargeting (hot audiences) while failing completely for prospecting (cold audiences), requiring stage-specific analysis rather than blended metrics.
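The definitions above reduce to a handful of ratios. Here is a small Python sketch that computes them for a single creative; the `CreativeStats` fields and the sample numbers are hypothetical stand-ins for whatever your reporting export provides.

```python
from dataclasses import dataclass


@dataclass
class CreativeStats:
    spend: float
    impressions: int
    clicks: int
    purchases: int
    new_customers: int
    revenue: float


def safe_div(numerator, denominator):
    """Return None instead of failing when a creative has no volume yet."""
    return numerator / denominator if denominator else None


def primary_metrics(stats):
    return {
        "cac": safe_div(stats.spend, stats.new_customers),   # cost per new customer
        "roas": safe_div(stats.revenue, stats.spend),        # revenue per ad dollar
        "cpc": safe_div(stats.spend, stats.clicks),          # cost per click
        "ctr": safe_div(stats.clicks, stats.impressions),    # clicks per impression
        "cvr": safe_div(stats.purchases, stats.clicks),      # purchases per click
    }


example = CreativeStats(spend=500.0, impressions=40_000, clicks=600,
                        purchases=15, new_customers=12, revenue=1_800.0)
metrics = primary_metrics(example)
print({name: round(value, 4) for name, value in metrics.items()})
```

Running the same function separately on cold, warm, and hot audience data gives the stage-specific view described in the last item, rather than one blended number.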

Secondary Performance Metrics: The Context Providers

These metrics provide essential context for primary metric interpretation:

Frequency: How many times the average user has seen the creative. Rising frequency with declining CTR and rising CPC indicates creative fatigue requiring replacement. Optimal frequency varies by platform and audience size, but keeping cold-audience frequency below roughly 2.5-3.0 generally prevents excessive fatigue.

Impression Share: What percentage of available impressions your creative captures. Low impression share might indicate insufficient budget allocation for a winning creative, while 100% impression share suggests the creative has saturated its audience and needs either creative refresh or audience expansion.

Add-to-Cart Rate: The percentage of sessions that add products to cart. This metric separates creatives that drive curiosity (clicks) from those that generate purchase intent (cart additions). Creatives with high clicks but low ATC rates may be misleading on value proposition or attracting non-buyer traffic.

Video Completion Rate (for video creatives): What percentage of users watch the video to completion or specific milestone percentages (25%, 50%, 75%). Low completion rates suggest either creative quality issues (boring, unclear, too long) or targeting problems (showing to disinterested audiences).

Cost Per Thousand Impressions (CPM): The cost of 1,000 impressions (not 1,000 unique users, since one user can be served several impressions). CPM trends reveal platform competition levels and creative quality signals from ad platforms. A CPM that falls over time suggests the platform is rewarding your creative with cheaper delivery.

Lifetime Value Metrics: The Profit Validators

Short-term metrics drive tactical decisions, but LTV metrics determine whether those decisions build sustainable businesses:

Customer Lifetime Value (LTV) by Creative Cohort: Track the actual lifetime value of customers acquired through each creative, measured over 30, 60, and 90+ day windows. This reveals which creatives attract one-time buyers versus repeat customers, fundamentally shifting which creatives deserve scaling.

Repeat Purchase Rate by Creative: What percentage of customers acquired through each creative make second purchases within defined timeframes. High repeat rates indicate creative messaging aligned with product experience, while low rates suggest misaligned expectations or quality issues.

Average Order Value (AOV) by Creative: Initial purchase size varies significantly by creative messaging. Creatives emphasizing premium positioning might have higher CAC but 2x higher AOV, making them more profitable than discount-focused alternatives with better surface-level ROAS.

Time to Second Purchase: How quickly customers acquired through each creative return for repeat purchases. Faster repurchase cycles indicate stronger product-market fit and messaging alignment, creating higher lifetime value even if absolute repeat rates are similar.

Contribution Margin by Creative: Different creatives might drive purchases of different products with varying margins. Track which creatives drive high-margin versus low-margin product sales to optimize for profit, not just revenue.
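As a rough illustration of cohort tracking, the sketch below groups orders by the creative that acquired each customer and reports LTV and repeat purchase rate within a chosen window. The order tuples, creative names, and field layout are invented for the example; a real pipeline would read them from your order and attribution data.

```python
from collections import defaultdict

# (customer_id, acquiring_creative, days_since_first_order, order_value)
orders = [
    ("c1", "discount_hook", 0, 40.0),
    ("c2", "discount_hook", 0, 35.0),
    ("c3", "premium_demo", 0, 80.0),
    ("c3", "premium_demo", 25, 80.0),
    ("c4", "premium_demo", 0, 90.0),
    ("c4", "premium_demo", 70, 60.0),
]


def cohort_summary(orders, window_days):
    """Per-creative customers, average LTV, and repeat rate inside the window."""
    revenue = defaultdict(float)
    order_counts = defaultdict(lambda: defaultdict(int))
    for customer, creative, day, value in orders:
        if day <= window_days:
            revenue[creative] += value
            order_counts[creative][customer] += 1
    summary = {}
    for creative, per_customer in order_counts.items():
        customers = len(per_customer)
        repeaters = sum(1 for count in per_customer.values() if count >= 2)
        summary[creative] = {
            "customers": customers,
            "ltv": revenue[creative] / customers,
            "repeat_rate": repeaters / customers,
        }
    return summary


for window in (30, 90):
    print(window, cohort_summary(orders, window))
```

Comparing the 30-day and 90-day summaries shows why the measurement window matters: a creative's cohort can look very different once later repeat orders are counted.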

The Creative Performance Analysis Framework: Kill-or-Scale Decision Process

Effective creative analysis follows a systematic framework that evaluates every creative through consistent criteria, removing emotion and bias from decisions.

Phase 1: The Learning Window (Days 0-3)

The first 48-72 hours after launching a new creative constitute the learning window, where premature judgments destroy potential while excessive patience wastes budget.

Minimum spend threshold: Allocate enough budget to reach statistical relevance. For most DTC brands, this means $300-500 minimum spend per creative for cold audiences, $100-200 for warm audiences. Insufficient spend generates noise, not signal.

Metric observation without action: During the learning window, monitor metrics but resist killing creatives unless performance is catastrophically bad (CAC over 3x target). Ad platforms require time to optimize delivery, and initial results often differ significantly from stabilized performance.

Early warning signals to monitor: While avoiding premature kills, watch for early signals predicting future performance. CTR below 0.8% on Meta or 1.5% on TikTok rarely improves. Conversion rates below 0.5% typically indicate fundamental creative or targeting misalignment.

Pacing analysis: Is the creative spending its allocated budget? Underspending suggests the platform can't find relevant audiences (potential targeting issues) or the creative loses auctions to competitors (quality score problems). Overspending indicates strong platform signals but requires close CAC monitoring.
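The learning-window rules can be sketched as a single check. The thresholds below mirror the figures in this section (minimum spend of $300, a kill only when CAC exceeds 3x target, and CTR and CVR floors of 0.8% and 0.5%); the function name, its return labels, and the handling of creatives with zero customers are assumptions made for illustration.

```python
def learning_window_status(spend, new_customers, ctr, cvr, *, target_cac,
                           min_spend=300.0, ctr_floor=0.008, cvr_floor=0.005):
    """Return (action, warnings) for a creative still in its first 72 hours."""
    warnings = []
    if ctr < ctr_floor:
        warnings.append("low_ctr")
    if cvr < cvr_floor:
        warnings.append("low_cvr")
    # Assumption: with zero customers, spend so far is used as a lower bound on CAC.
    cac_so_far = spend / new_customers if new_customers else spend
    if cac_so_far > 3 * target_cac:
        return "kill", warnings  # catastrophic: more than 3x target CAC
    if spend < min_spend:
        return "keep_collecting", warnings  # not enough data to judge yet
    return "ready_for_evaluation", warnings


print(learning_window_status(150.0, 3, 0.012, 0.02, target_cac=40.0))
print(learning_window_status(400.0, 2, 0.006, 0.004, target_cac=40.0))
print(learning_window_status(350.0, 8, 0.012, 0.02, target_cac=40.0))
```

Warnings are returned separately from the action on purpose: early signals are worth logging, but in this sketch only the catastrophic CAC rule triggers a kill during the learning window.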

Phase 2: The Evaluation Window (Days 3-7)

Once creatives pass the learning window with sufficient data, formal evaluation determines kill-or-scale-or-iterate decisions.

Benchmark comparison methodology: Compare each creative's performance against your established benchmarks across all primary metrics. Benchmarks should reflect your specific business economics, not industry averages or platform recommendations.

CAC threshold evaluation: Is the creative's CAC below your maximum allowable CAC (calculated as LTV divided by your required LTV:CAC ratio, typically 3:1 minimum)? Creatives exceeding CAC thresholds get killed unless other extraordinary metrics justify continued testing.

ROAS threshold evaluation: Does ROAS exceed your minimum profitable threshold? For most DTC brands, this is 2.5-4.0x first-purchase ROAS depending on repeat purchase economics and contribution margins.

Relative performance ranking: How does this creative perform versus other active creatives in the same campaign, ad set, or awareness stage? Creatives consistently in the bottom 25% of performance get killed even if they technically meet absolute benchmarks, because they're using budget that could scale top performers.

Trend analysis: Are metrics improving, stable, or declining? A creative with borderline performance but improving trends (declining CAC, rising ROAS) deserves continued testing, while one with acceptable current metrics but degrading trends likely faces imminent fatigue.
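Two of the evaluation steps above are simple enough to sketch directly: the maximum allowable CAC (LTV divided by the required LTV:CAC ratio) and the bottom-quartile ranking. The creative names and CAC values are hypothetical, and flooring the quartile size is one possible convention among several.

```python
def max_allowable_cac(ltv, required_ratio=3.0):
    """LTV divided by the required LTV:CAC ratio (3:1 by default)."""
    return ltv / required_ratio


def bottom_quartile(cac_by_creative):
    """Names of the worst 25% of creatives by CAC (highest CAC is worst)."""
    ranked = sorted(cac_by_creative, key=cac_by_creative.get, reverse=True)
    cutoff = len(ranked) // 4  # floor: with fewer than 4 creatives nobody is flagged
    return set(ranked[:cutoff])


# Hypothetical creative-level CAC for one cold-prospecting campaign.
cacs = {"a": 28.0, "b": 35.0, "c": 39.0, "d": 44.0,
        "e": 31.0, "f": 52.0, "g": 37.0, "h": 61.0}

limit = max_allowable_cac(ltv=120.0)
over_limit = {name for name, cac in cacs.items() if cac > limit}
laggards = bottom_quartile(cacs)
print(limit, sorted(over_limit), sorted(laggards))
```

The two checks answer different questions: `over_limit` flags creatives that fail the absolute threshold, while `laggards` flags the weakest relative performers even when some of them would pass it.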

Phase 3: The Scale-or-Kill Decision (Day 7+)

By day seven, creatives should clearly fall into winner, loser, or iteration-candidate categories.

Winners: Aggressive scaling criteria

- CAC at least 20% below maximum allowable

- ROAS at least 25% above minimum threshold

- Stable or improving trend over past 72 hours

- Volume potential (not already at 100% impression share)

Winners receive immediate budget increases of 25-50% daily until CAC rises above threshold or impression share maxes out. Don't scale timidly—winning creatives fatigue within two to three weeks, requiring aggressive scaling during their peak performance window.
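Daily increases of 25-50% compound quickly, which a short calculation makes visible. This sketch also encodes the two stop conditions named above (CAC above threshold, impression share maxed out); the function names and sample figures are illustrative assumptions.

```python
def compounded_budget(start_budget, daily_increase, days):
    """Budget after `days` consecutive increases of `daily_increase` (0.25 = 25%)."""
    return start_budget * (1 + daily_increase) ** days


def next_daily_budget(budget, cac, max_cac, impression_share, step=0.25):
    """Raise budget by `step` unless CAC or impression share says stop."""
    if cac > max_cac or impression_share >= 1.0:
        return budget  # hold: a stop condition has been reached
    return budget * (1 + step)


print(round(compounded_budget(100.0, 0.25, 7), 2))
print(round(compounded_budget(100.0, 0.50, 7), 2))
print(next_daily_budget(200.0, cac=30.0, max_cac=40.0, impression_share=0.6))
```

At 25% per day a budget grows to roughly 4.8 times its starting level after a week; at 50% per day, roughly 17 times. That is why the stop conditions need to be checked every day rather than at the end of the week.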

Losers: Fast kill criteria

- CAC exceeds maximum allowable by 20%+ after day 3

- ROAS below minimum threshold with no improvement trend

- Bottom quartile performance versus other active creatives

- Declining metrics (rising CAC, falling ROAS) over 48+ hours

Losers get killed immediately without sentimentality. Every dollar spent on losing creatives is a dollar not spent scaling winners or testing new candidates. Fast kills prevent sunk cost fallacy and maintain testing velocity.

Iteration candidates: The middle ground

- Performance close to thresholds (within 10% in either direction)

- Strong engagement metrics (CTR, video completion) but weak conversion

- High conversion rate but poor reach/volume

- Specific element (hook, CTA, offer) likely causing underperformance

Iteration candidates deserve creative variations testing specific hypotheses: new hooks, different CTAs, adjusted messaging, alternative formats. Launch two to three variations within 48 hours rather than continuing to run marginal performers unchanged.
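The three categories can be expressed as a small decision function. This is one possible reading of the criteria, with assumptions stated in the comments: a winner must meet all of the winner criteria, a loser is anything that trips any one loser criterion, and everything else becomes an iteration candidate. The function and argument names are hypothetical.

```python
def classify(cac, roas, trend, *, max_cac, min_roas,
             in_bottom_quartile=False, impression_share=0.0):
    """Return "winner", "loser", or "iterate" for a creative at day 7+.

    `trend` is one of "improving", "stable", "declining".
    """
    # Assumption: all four winner criteria must hold together.
    is_winner = (
        cac <= 0.80 * max_cac            # at least 20% below maximum allowable
        and roas >= 1.25 * min_roas      # at least 25% above minimum threshold
        and trend in ("stable", "improving")
        and impression_share < 1.0       # still has volume potential
    )
    if is_winner:
        return "winner"
    # Assumption: any single loser criterion is enough to kill.
    is_loser = (
        cac >= 1.20 * max_cac
        or (roas < min_roas and trend != "improving")
        or in_bottom_quartile
        or trend == "declining"
    )
    if is_loser:
        return "loser"
    return "iterate"


print(classify(30.0, 4.0, "stable", max_cac=40.0, min_roas=3.0))
print(classify(50.0, 2.5, "stable", max_cac=40.0, min_roas=3.0))
print(classify(41.0, 3.1, "improving", max_cac=40.0, min_roas=3.0))
```

Teams that prefer a stricter or looser kill rule can change the `any`-style loser logic to require two or more criteria; the point of writing it down is that every creative is judged by the same rule.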

Phase 4: The Fatigue Monitoring (Ongoing)

Even winning creatives eventually fatigue, requiring constant monitoring and proactive replacement.

Frequency threshold monitoring: When frequency exceeds 2.5-3.0 for cold audiences or 4.0-5.0 for warm/hot audiences, creative fatigue risk rises sharply. Begin preparing replacement creatives before fatigue impacts performance.

Metric degradation detection: Watch for the fatigue cascade—frequency rising, CTR declining 15-20% from peak, CPC increasing proportionally, CAC following upward. Catch this pattern early and rotate to fresh creatives before CAC destroys profitability.

Diminishing returns on scaling: If increasing budget by 50% only increases conversions by 20%, the creative has hit volume limits. Rather than forcing more spend into saturated audiences, rotate to new creatives targeting fresh users.

Scheduled creative rotation: Even creatives still performing at threshold should be rotated every 14-21 days as proactive fatigue prevention. Don't wait for performance to decline—maintain creative freshness before audiences tire of messaging.
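Two of the checks above lend themselves to a quick calculation: spotting the fatigue cascade and measuring diminishing returns. The sketch below uses the thresholds from this section (frequency of 2.5, CTR down 15% from peak) and treats any CPC above baseline as a rise; the function names and sample values are assumptions for illustration.

```python
def fatigue_signals(frequency, ctr, peak_ctr, cpc, baseline_cpc,
                    *, freq_limit=2.5, ctr_drop=0.15):
    """List which parts of the fatigue cascade are currently visible."""
    signals = []
    if frequency >= freq_limit:
        signals.append("frequency")
    if peak_ctr > 0 and (peak_ctr - ctr) / peak_ctr >= ctr_drop:
        signals.append("ctr_decline")
    if cpc > baseline_cpc:
        signals.append("cpc_rise")
    return signals


def marginal_cac(spend_before, conv_before, spend_after, conv_after):
    """Cost of each additional conversion bought by a budget increase."""
    extra_conversions = conv_after - conv_before
    if extra_conversions <= 0:
        return None  # extra spend bought nothing
    return (spend_after - spend_before) / extra_conversions


# Hypothetical: budget up 50% ($1,000 to $1,500), conversions up 20% (25 to 30).
print(fatigue_signals(2.8, 0.010, 0.0125, 0.95, 0.80))
print(marginal_cac(1000.0, 25, 1500.0, 30))
```

In the budget example, average CAC only moves from $40 to $50, but each additional conversion costs $100. Looking at the marginal figure rather than the blended one is what reveals that the creative has hit its volume limit.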

Platform-Specific Creative Analysis Nuances

While the core framework applies universally, each advertising platform requires specific analytical adjustments based on platform mechanics and user behavior.

Meta (Facebook/Instagram) Creative Analysis

Meta's algorithm and user behavior create specific analytical requirements:

Faster decision timelines: Creative fatigue strikes faster on Meta (five to seven days) than on other platforms, compressing kill-or-scale decisions. Begin formal evaluation as soon as the 72-hour learning window closes rather than waiting full weeks.

Frequency as primary fatigue indicator: Meta's frequency metric provides the clearest early warning of creative fatigue. Monitor daily and prepare replacements when frequency approaches 2.5 for cold audiences.

Campaign Budget Optimization (CBO) complications: Under CBO (which Meta now labels Advantage campaign budget), Meta allocates budget across ad sets dynamically, making creative-level analysis harder. Review results at the ad level in Ads Manager to see creative-specific performance despite CBO budget pooling.

Placement-specific performance: The same creative might excel on Instagram Feed while failing on Facebook Feed. Analyze performance by placement and consider placement-specific creative variations rather than killing creatives that only underperform in specific locations.

Creative-to-landing page message match: Meta users expect immediate relevance after clicking. Analyze bounce rate and time-on-site alongside conversion rate—high clicks but poor site engagement suggests creative-to-landing-page misalignment requiring messaging adjustment.

TikTok Creative Analysis

TikTok's unique content culture demands different analytical approaches:

Native content performance premium: Analyze "ad-like" versus "native" creative performance separately. TikTok users strongly prefer content feeling organic to the platform, with native-style creatives often achieving 2-3x better CTR and 30-40% lower CPC than obvious ads.

Video completion rate as quality signal: TikTok's algorithm heavily weights video completion. Creatives with high completion rates (50%+ to end, 80%+ to 3-second mark) receive preferential distribution and lower costs. This metric deserves weight equal to CTR in TikTok-specific analysis.

Spark Ads performance differential: Organic posts boosted via Spark Ads typically outperform direct-upload ads by 20-40% on engagement and 15-25% on conversion. When analyzing TikTok creative performance, segment Spark Ads versus standard ads to avoid false equivalencies.

Longer testing windows acceptable: Creative fatigue sets in more slowly on TikTok than on Meta (seven to ten days versus five to seven), allowing slightly extended evaluation windows before kill-or-scale decisions.

Google Performance Max Creative Analysis

Google's automated campaign type creates analytical challenges requiring workarounds:

Asset group performance over individual creatives: Performance Max doesn't provide creative-level reporting by default. Analyze asset group performance instead, treating each asset group as a "creative concept" combining multiple asset variations.

Conversion lag accounting: Google conversions often lag clicks by several days due to consideration cycles. Extend evaluation windows to seven to ten days minimum and use conversion tracking windows of 30+ days to capture full impact.

Limited creative control necessitates broad testing: Since you can't precisely control which assets Google serves, test dramatically different asset groups (different value propositions, offers, audiences) rather than subtle variations. Subtle differences get lost in Google's mixing.

Search term report insights: For Performance Max campaigns including Search inventory, analyze search term reports to understand what queries trigger your creatives. Unexpected search terms suggest creative messaging misalignment with actual delivery.

YouTube Creative Analysis

YouTube's video format and user intent create unique analytical requirements:

Completion milestones matter more than CTR: YouTube users often watch ads without clicking, then search branded terms or visit directly later. Track video completion at 25%, 50%, 75%, and 100% as engagement quality signals, not just click-through rate.

View-through conversion attribution: YouTube drives significant view-through conversions (users who saw the ad but didn't click, converting later). Enable view-through conversion tracking and include VTC in creative performance analysis to avoid undervaluing awareness-building creatives.

Longer creative lifecycles: YouTube creatives fatigue more slowly (fourteen to twenty-one days) due to larger audience reach and less frequent repeat exposure. This allows longer evaluation windows and extended scaling runs before fatigue necessitates replacement.

Skippable versus non-skippable dynamics: Analyze these formats separately with different benchmarks. Non-skippable ads should achieve higher completion rates but often have higher CPM; skippable ads need strong hook retention in the first five seconds to prevent excessive skipping.

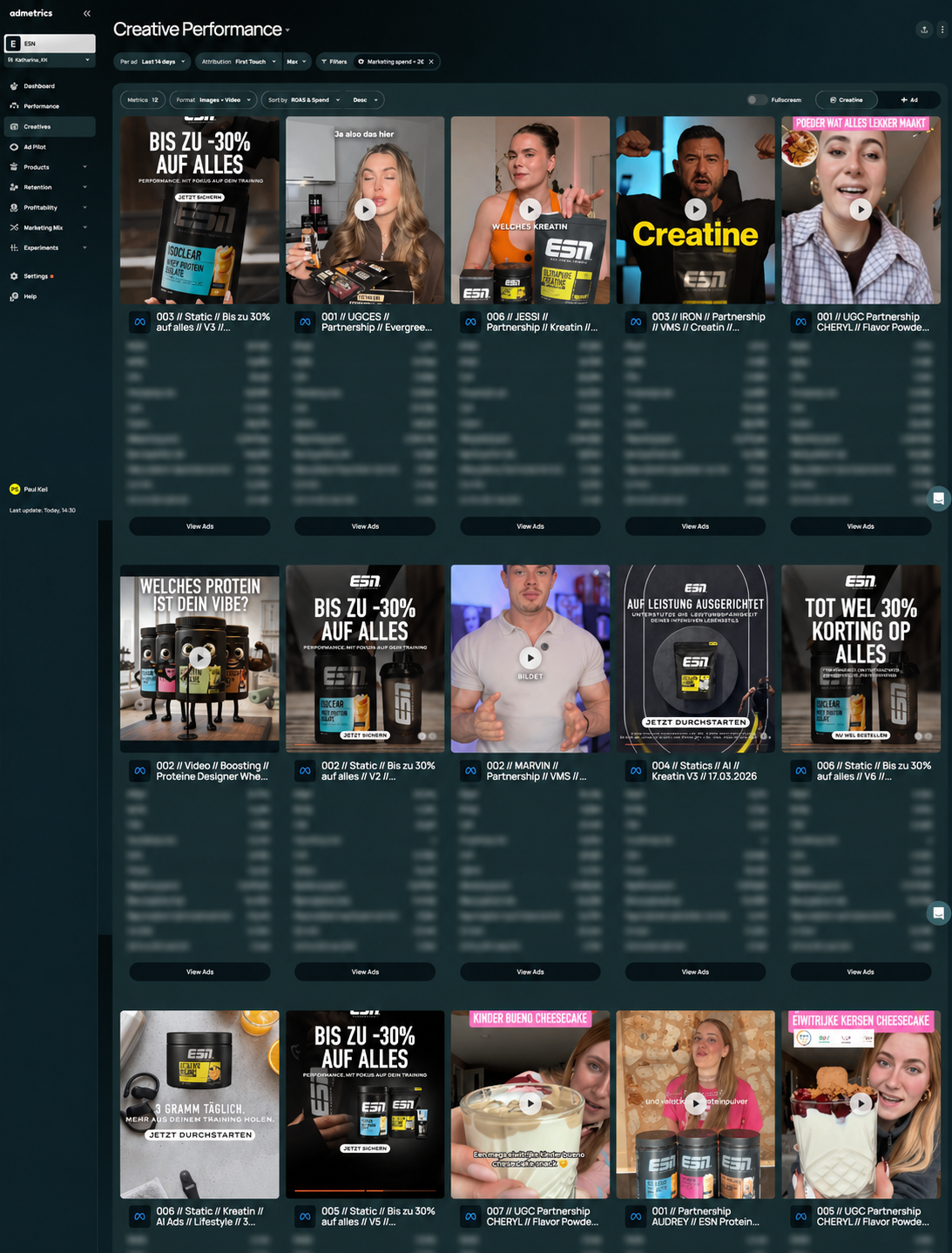

How Admetrics Transforms Creative Performance Analysis

While ad platforms provide basic creative reporting, systematic kill-or-scale decision-making at scale requires dedicated analytics infrastructure that centralizes data, applies consistent frameworks, and surfaces insights proactively.

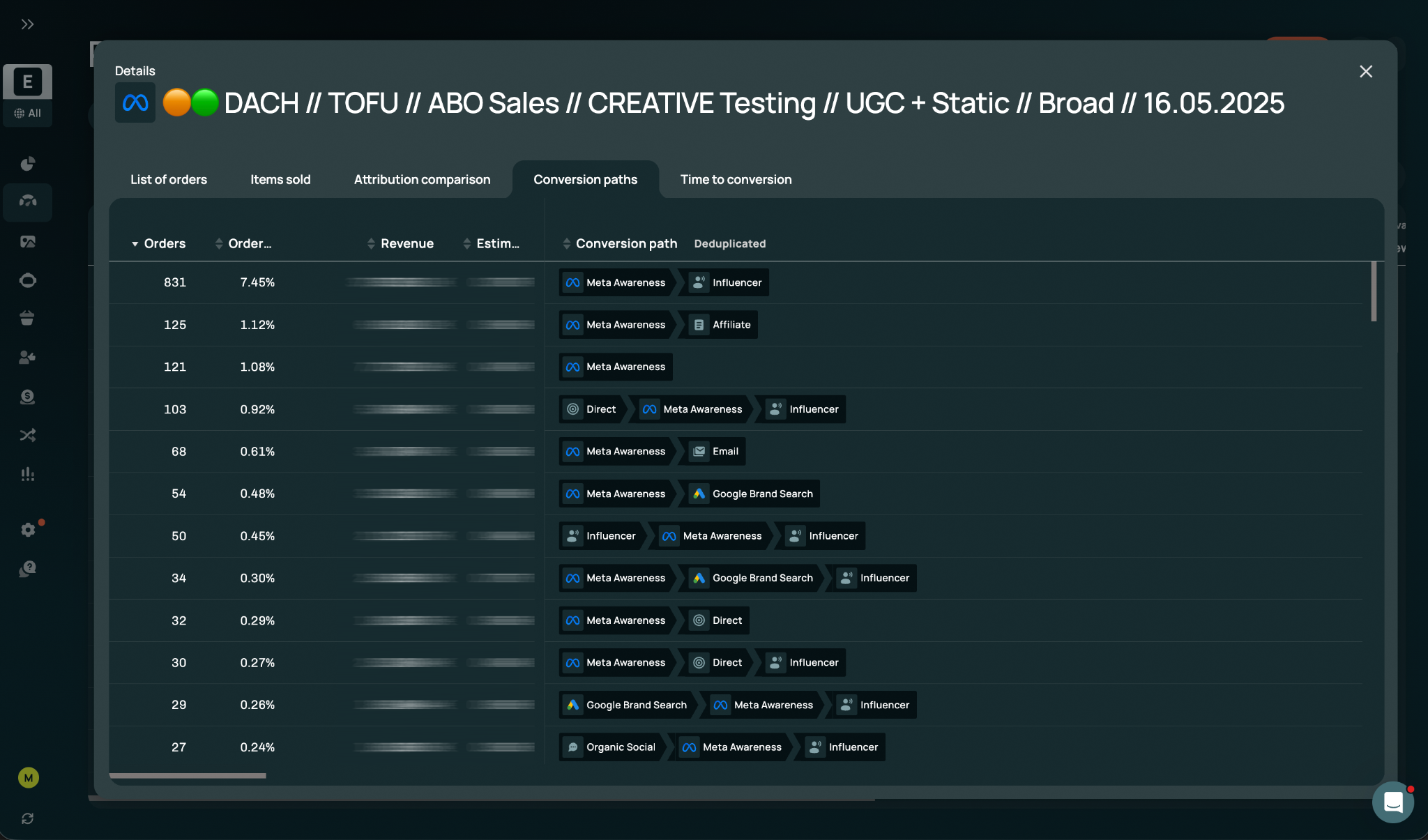

Creative-Level Attribution Across Platforms

Admetrics tracks performance down to individual creative assets across all advertising platforms, connecting specific images, videos, headlines, and copy variations to customer acquisition cost, conversion rate, and critically, lifetime value.

This unified creative intelligence eliminates the platform-hopping required to analyze creative performance when running campaigns across Meta, Google, TikTok, Pinterest, and other channels. One dashboard shows all creatives ranked by any metric, with consistent kill-or-scale criteria applied regardless of originating platform.

For brands running twenty to fifty creatives simultaneously across multiple platforms, this centralization transforms creative analysis from multi-hour weekly exercises into real-time dashboard reviews.

Automated Fatigue Detection and Alerts

Rather than manually monitoring frequency, CTR trends, and CPC movements across dozens of creatives, Admetrics' AI identifies creative fatigue patterns automatically.

The platform analyzes historical fatigue patterns specific to your account—learning that your Meta creatives typically fatigue at 2.3 frequency while TikTok creatives maintain performance to 3.8 frequency—then alerts teams when individual creatives approach their fatigue thresholds.

These proactive alerts enable replacement scheduling before performance degrades, preventing the budget waste that occurs when fatigue hits unexpectedly without fresh creatives ready to deploy.

Multi-Metric Performance Scorecards

Admetrics generates creative performance scorecards evaluating each creative across eight to ten metrics simultaneously, weighted according to your business priorities. Rather than analyzing CAC, then ROAS, then CTR separately, scorecards provide single composite scores instantly revealing winners and losers.

This multi-dimensional approach prevents single-metric optimization blind spots. A creative might have excellent CAC but poor LTV, or strong ROAS but insufficient volume potential—scorecards surface these trade-offs immediately.

LTV-Adjusted Creative Rankings

Perhaps Admetrics' most powerful creative analysis capability is connecting creatives to actual customer lifetime value, not just first-purchase metrics.

The platform tracks customer cohorts by acquisition creative, measuring 30-day, 60-day, 90-day, and longer-term LTV. This reveals which creatives attract one-time bargain hunters versus loyal repeat customers—intelligence impossible to glean from standard ad platform reporting focused exclusively on first-purchase performance.

For subscription businesses or brands with strong repeat purchase economics, LTV-adjusted creative analysis completely transforms kill-or-scale decisions. Creatives with mediocre first-purchase ROAS that attract 2x higher LTV customers deserve aggressive scaling despite appearing unremarkable in standard analysis.

Concept Performance Clustering

Beyond individual creative analysis, Admetrics groups creatives by underlying concept, revealing which core ideas perform best regardless of specific execution variations.

This concept-level intelligence compounds over time. After testing forty social proof creatives, twenty product demonstration creatives, and fifteen founder story creatives, you know definitively which creative archetypes resonate with your audience—data informing all future creative development.

Concept clustering also prevents falsely killing winning ideas due to poor execution. A strong concept tested with weak video production might underperform, but concept clustering reveals that other variations of the same core idea succeeded—suggesting the concept deserves retesting with better execution rather than abandonment.

Creative Production ROI Analysis

Admetrics tracks creative production costs alongside performance metrics, calculating return on creative investment and efficiency-adjusted performance metrics.

A $5,000 video creative achieving 3.5x ROAS might look strong in isolation, but if a $300 UGC creative achieves 3.2x ROAS, the UGC delivers dramatically better creative ROI (return divided by production cost). This analysis ensures you're not just scaling performance winners but also production efficiency winners.

For brands producing fifty to one hundred creatives monthly, understanding which production approaches deliver optimal performance-to-cost ratios informs resource allocation: more UGC, fewer expensive studio shoots, or vice versa depending on your specific creative ROI data.
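The $5,000 video versus $300 UGC comparison above can be worked through in a few lines. The source gives only ROAS and production cost, so this sketch assumes, purely for illustration, that both creatives ran on the same $10,000 of ad spend; the function names are hypothetical and do not represent how Admetrics computes its figures.

```python
def creative_roi(ad_spend, roas, production_cost):
    """Revenue generated per dollar of production cost."""
    return ad_spend * roas / production_cost


def all_in_roas(ad_spend, roas, production_cost):
    """Revenue divided by ad spend plus production cost."""
    return ad_spend * roas / (ad_spend + production_cost)


AD_SPEND = 10_000.0  # assumed equal for both creatives

video = {"roas": 3.5, "production_cost": 5_000.0}
ugc = {"roas": 3.2, "production_cost": 300.0}

for name, creative in (("video", video), ("ugc", ugc)):
    roi = creative_roi(AD_SPEND, creative["roas"], creative["production_cost"])
    blended = all_in_roas(AD_SPEND, creative["roas"], creative["production_cost"])
    print(f"{name}: creative_roi={roi:.1f}x all_in_roas={blended:.2f}x")
```

Under that equal-spend assumption the video returns about 7x its production cost and the UGC about 107x. On an all-in basis (ad spend plus production) the UGC also comes out ahead, roughly 3.1x versus 2.3x, although the gap narrows as ad spend grows relative to production cost.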

Building Systematic Creative Analysis Workflows

Technology and metrics matter, but systematic workflows ensure creative analysis actually drives consistent kill-or-scale decisions rather than sporadic reviews when someone remembers to check dashboards.

Daily Creative Performance Review Ritual

High-performing teams review creative performance daily, not weekly, enabling fast kills and aggressive scaling while opportunities exist:

Morning performance snapshot (15 minutes): Review yesterday's creative performance against benchmarks. Identify any creatives that crossed kill thresholds overnight or winners showing breakthrough performance worthy of immediate scaling.

Fatigue monitoring check (10 minutes): Review frequency levels and metric trends for all active creatives, flagging any approaching fatigue thresholds for replacement planning.

Budget reallocation (10 minutes): Shift budget from underperformers to overperformers based on the previous day's data. Don't wait for weekly reviews; daily reallocation captures opportunities before they dissipate.

Alert response (as needed): When analytics platforms like Admetrics send fatigue alerts or performance anomaly notifications, respond same-day with creative rotation or budget adjustment.

This daily ritual requires only thirty to forty minutes but prevents the budget waste and missed opportunities inherent in weekly-only review cadences.

Weekly Creative Strategy Session

While daily reviews handle tactical kill-or-scale decisions, weekly strategy sessions analyze patterns and inform future creative development:

Winner pattern analysis (30 minutes): Review the past week's top-performing creatives, identifying common elements—hooks, value propositions, creative archetypes, formats. These patterns inform next week's creative briefs.

Loser pattern analysis (20 minutes): Similarly analyze underperformers to identify what to avoid. Did all founder-focused creatives underperform? Did specific hooks fail across multiple variations? Document these learnings to prevent repeating failures.

Creative velocity calculation (10 minutes): Calculate the past week's creative velocity (new creatives deployed per $10,000 of spend). Compare it to targets and previous weeks, and diagnose any shortfalls.

Pipeline planning (30 minutes): Based on fatigue monitoring and creative velocity needs, plan next week's creative production priorities. Which awareness stages need fresh creatives? Which channels show fatigue? Which archetypes need testing?

Benchmark calibration (10 minutes): Review whether current kill-or-scale thresholds remain appropriate. If overall account performance improved, raise thresholds so only truly exceptional creatives get scaled. If struggling, temporarily relax thresholds to maintain testing volume.
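Creative velocity, as defined in this session (new creatives deployed per $10,000 of spend), is a one-line calculation. The sketch below adds a comparison against a target; the weekly figures and the target of 3.0 are made-up values.

```python
def creative_velocity(new_creatives, spend, per=10_000):
    """New creatives deployed per `per` dollars of ad spend."""
    if spend <= 0:
        raise ValueError("spend must be positive")
    return new_creatives * per / spend


TARGET_VELOCITY = 3.0  # hypothetical target

this_week = creative_velocity(new_creatives=5, spend=40_000)
last_week = creative_velocity(new_creatives=12, spend=40_000)
shortfall = max(0.0, TARGET_VELOCITY - this_week)
print(this_week, last_week, shortfall)
```

Tracking the shortfall week over week makes it obvious when spend is scaling faster than creative production, which is usually when fatigue starts to bite.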

Monthly Creative Performance Review

Monthly reviews provide strategic perspective beyond weekly tactical optimization:

Cohort LTV analysis: Review 30-day, 60-day, and 90-day LTV for customer cohorts acquired through different creatives in previous months. This long-term perspective often reveals that creatives appearing mediocre in week-one analysis actually drove high-value customers.

Creative archetype effectiveness: Calculate overall performance by creative archetype (problem-solution, product demonstration, social proof, etc.) across the month. Shift creative production emphasis toward proven archetypes while reducing investment in consistently underperforming approaches.

Platform-specific creative insights: Analyze which creative types work best on each platform. Maybe carousel ads dominate on Meta while single-image ads win on Pinterest, or UGC crushes on TikTok while professional production excels on YouTube.

Production efficiency analysis: Review creative production costs versus performance, identifying which production approaches (UGC, creator partnerships, in-house, agency) deliver optimal creative ROI.

Testing backlog prioritization: Based on monthly learnings, prioritize next month's creative testing hypotheses. Which concepts deserve variation testing? Which new archetypes are worth exploring? Which winning creatives need refresh variations before fatigue?

Common Creative Analysis Mistakes to Avoid

Even with frameworks and tools, specific analytical mistakes destroy creative performance optimization:

Mistake 1: Insufficient Budget for Statistical Relevance

Running ten creatives with $50 each generates noise, not insights. Each creative needs minimum spend to reach statistical relevance—typically $300-500 for cold audiences depending on your conversion rates and AOV.

Under-budgeting creative tests creates false negatives (killing potential winners due to insufficient data) and false positives (scaling apparent winners that were statistical flukes). Better to test five creatives with $500 each than ten creatives with $250 each.

Mistake 2: Comparing Creatives Across Incomparable Contexts

Comparing a cold prospecting creative's CAC to a hot retargeting creative's CAC creates false conclusions. Different awareness stages, audiences, and campaign objectives demand different benchmarks.

Always segment creative analysis by awareness stage (cold/warm/hot), campaign objective (awareness/consideration/conversion), and audience characteristics. A cold prospecting creative with $75 CAC might be excellent while a retargeting creative at the same CAC is disastrous.
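A minimal way to enforce stage-specific comparison is to divide each creative's CAC by the benchmark for its own awareness stage. The maximum CAC values per stage below are hypothetical placeholders, chosen only so that the $75 example from the text can be checked.

```python
# Hypothetical maximum allowable CAC per awareness stage.
MAX_CAC_BY_STAGE = {"cold": 90.0, "warm": 50.0, "hot": 30.0}


def cac_vs_benchmark(cac, stage, benchmarks=MAX_CAC_BY_STAGE):
    """CAC as a multiple of the stage benchmark (below 1.0 means within budget)."""
    return cac / benchmarks[stage]


for stage in ("cold", "hot"):
    ratio = cac_vs_benchmark(75.0, stage)
    verdict = "within benchmark" if ratio <= 1.0 else "over benchmark"
    print(f"{stage}: {ratio:.2f}x ({verdict})")
```

With these placeholder benchmarks, the same $75 CAC sits comfortably inside the cold-prospecting limit but runs at 2.5 times the retargeting limit.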

Mistake 3: Ignoring Creative Interaction Effects

Analyzing creatives in isolation misses interaction effects where performance depends on what other creatives are running simultaneously.

Multiple creatives with similar messaging create frequency exhaustion faster than diverse creative portfolios. A new social proof creative might underperform not because it's weak, but because you're already running five other social proof creatives, saturating that archetype.

Track creative diversity alongside individual performance, ensuring balanced archetype representation rather than accidentally oversaturating audiences with repetitive messaging.

Mistake 4: Optimizing for First Touch Instead of Full Journey

Creative performance conclusions change dramatically depending on the attribution model: giving all credit to the first ad a customer saw (first-touch), all credit to the last ad (last-touch), or splitting credit across touchpoints (multi-touch).

Bottom-of-funnel creatives (retargeting) always look amazing in last-touch attribution while top-of-funnel awareness creatives appear worthless. The reverse happens with first-touch attribution. Neither reflects reality.

Use multi-touch attribution models that assign credit across the customer journey, recognizing that awareness creatives, consideration creatives, and conversion creatives all contribute to eventual purchases.
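To see how much the attribution model changes the picture, the same journeys can be credited under three rules. The sketch below uses linear (equal-split) attribution as the multi-touch example; it is only one of several multi-touch models, and the creative names and revenue figures are invented for illustration.

```python
from collections import defaultdict


def attribute(journeys, model="linear"):
    """Credit revenue to creatives.

    journeys: list of (touchpoints, revenue), where touchpoints is the ordered
    list of creative IDs a customer saw before purchasing.
    """
    credit = defaultdict(float)
    for touchpoints, revenue in journeys:
        if not touchpoints:
            continue  # nothing to credit
        if model == "first_touch":
            credit[touchpoints[0]] += revenue
        elif model == "last_touch":
            credit[touchpoints[-1]] += revenue
        elif model == "linear":
            share = revenue / len(touchpoints)  # equal split across all touches
            for creative in touchpoints:
                credit[creative] += share
        else:
            raise ValueError(f"unknown model: {model}")
    return dict(credit)


journeys = [
    (["awareness_video", "consideration_carousel", "retargeting_offer"], 120.0),
    (["awareness_video", "retargeting_offer"], 80.0),
]
for model in ("first_touch", "last_touch", "linear"):
    print(model, attribute(journeys, model))
```

In this toy data, first-touch gives the awareness video all $200, last-touch gives the retargeting offer all $200, and the linear split credits $80, $40, and $80 to the awareness, consideration, and retargeting creatives respectively.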

Mistake 5: Letting Creative Fatigue Accumulate Before Replacement

Waiting until creative performance has clearly degraded before replacing creatives maximizes budget waste. The days between fatigue onset and replacement burn money at declining efficiency.

A proactive rotation schedule replaces creatives before fatigue affects performance. Even creatives still meeting thresholds should be rotated every fourteen to twenty-one days to prevent fatigue rather than reacting to it.
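A rotation schedule like this reduces to date arithmetic on each creative's launch date. A minimal sketch, assuming a hypothetical helper `days_until_rotation` and the fourteen-day lower bound as the default maximum age:

```python
from datetime import date


def days_until_rotation(launch_date: date, today: date, max_age_days: int = 14) -> int:
    """Days left before a creative reaches its scheduled rotation age.

    Returns 0 when rotation is due or overdue. max_age_days defaults to the
    lower end of the fourteen to twenty-one day window.
    """
    age_days = (today - launch_date).days
    return max(0, max_age_days - age_days)


launch = date(2024, 3, 1)
print(days_until_rotation(launch, date(2024, 3, 10)))  # 5
print(days_until_rotation(launch, date(2024, 3, 15)))  # 0
```

Running this daily over all active creatives gives production a lead time to have replacements ready before the rotation date arrives.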

Conclusion: Systematic Creative Analysis Drives Sustainable Growth

Learning how to analyze ad creative performance systematically transforms advertising from expensive guessing into predictable revenue generation. The difference between brands that scale profitably and those that burn capital fighting rising CAC isn't access to better creative ideas or larger budgets—it's rigorous analytical frameworks that identify losers fast enough to prevent waste and recognize winners early enough to capture maximum opportunity before fatigue.

The best-performing DTC brands implement daily creative performance reviews using platforms like Admetrics that centralize creative-level data across channels, apply consistent kill-or-scale criteria, detect fatigue patterns automatically, and connect creatives to actual customer lifetime value rather than just first-purchase metrics. These systematic workflows enable the fast decisions and aggressive scaling that compound into sustainable competitive advantages.

For ecommerce brands and DTC leaders serious about marketing efficiency, building systematic creative analysis capabilities represents one of the highest-ROI investments available. The frameworks are proven, the tools are accessible, and the economic impact is immediate: most brands implementing rigorous creative analysis see CAC decrease 20-35% within four to six weeks simply by reallocating budget from unnoticed underperformers to under-invested winners.

Ready to transform creative analysis from occasional spreadsheet exercises into systematic kill-or-scale workflows? Start your free Admetrics trial today and access creative-level attribution across all platforms, automated fatigue detection with proactive alerts, and multi-metric performance scorecards.

Frequently Asked Questions

How do you analyze ad creative performance to identify winners and losers?

To analyze ad creative performance effectively, track primary metrics (CAC, ROAS, CPC, CTR, conversion rate) alongside LTV data, comparing each creative against established benchmarks during a three-to-seven-day evaluation window. Winners show CAC at least 20% below the maximum allowable, ROAS at least 25% above the minimum threshold, and stable or improving trends. Losers exceed the CAC threshold by 20% or more, fall below the ROAS minimum, or rank in the bottom quartile among active creatives. Use platforms like Admetrics to centralize creative-level data across channels with automated fatigue detection.
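The CAC and ROAS thresholds in this answer translate directly into a rule. The sketch below is a simplified illustration with a hypothetical function name: it covers only the CAC and ROAS criteria and leaves out the trend and bottom-quartile checks.

```python
def classify_creative(cac: float, roas: float, max_cac: float, min_roas: float) -> str:
    """Label a creative 'winner', 'loser', or 'hold' from CAC and ROAS alone.

    max_cac and min_roas are your own maximum allowable CAC and minimum ROAS.
    """
    # Loser: CAC 20% or more over the ceiling, or ROAS under the minimum.
    if cac >= 1.2 * max_cac or roas < min_roas:
        return "loser"
    # Winner: CAC at least 20% under the ceiling and ROAS at least 25% over the minimum.
    if cac <= 0.8 * max_cac and roas >= 1.25 * min_roas:
        return "winner"
    # Everything in between needs more data or closer review.
    return "hold"


print(classify_creative(cac=38, roas=3.2, max_cac=50, min_roas=2.0))  # winner
print(classify_creative(cac=65, roas=2.4, max_cac=50, min_roas=2.0))  # loser
print(classify_creative(cac=45, roas=2.2, max_cac=50, min_roas=2.0))  # hold
```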

What metrics matter most when analyzing creative performance for scaling?

The metrics that matter most when analyzing creative performance for scaling are customer acquisition cost (CAC) relative to lifetime value, return on ad spend (ROAS), and customer LTV by creative cohort. While engagement metrics like CTR and CPC provide early warning signals of creative fatigue, profitability metrics determine which creatives deserve aggressive budget increases. Track CAC trends over the creative lifecycle—winners maintain stable CAC while scaling, whereas creatives showing CAC increases of 15-20% during scaling attempts have hit volume limits requiring creative rotation rather than continued budget increases.

How quickly should I kill underperforming ad creatives?

You should kill underperforming ad creatives within 48-72 hours after they accumulate sufficient data for statistical relevance, typically $300-500 spend for cold audiences or $100-200 for warm/hot audiences. Creatives showing CAC exceeding maximum allowable by 20%+ after this learning window should be killed immediately to prevent budget waste. Every day spent running confirmed losers is money not spent scaling winners or testing new candidates. The exception is iteration candidates showing strong engagement but weak conversion, which deserve two to three creative variations testing specific improvement hypotheses within 48 hours.
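The kill rule and its iteration exception can be sketched as one gated decision. Several pieces here are assumptions: the function name, using the lower end of each spend range as the gate, and treating "strong engagement" as CTR at or above a benchmark CTR you supply.

```python
# Assumed minimum spend before judging a creative (lower end of each range above).
MIN_SPEND = {"cold": 300.0, "warm": 100.0, "hot": 100.0}


def kill_decision(audience: str, spend: float, cac: float, max_cac: float,
                  ctr: float, benchmark_ctr: float) -> str:
    """Return 'keep_testing', 'keep', 'iterate', or 'kill' for one creative."""
    if spend < MIN_SPEND[audience]:
        return "keep_testing"      # not enough data to judge yet
    if cac < 1.2 * max_cac:
        return "keep"              # CAC is not 20%+ over the ceiling
    if ctr >= benchmark_ctr:
        return "iterate"           # strong engagement, weak conversion
    return "kill"


print(kill_decision("cold", spend=150, cac=200, max_cac=50, ctr=0.010, benchmark_ctr=0.015))  # keep_testing
print(kill_decision("cold", spend=350, cac=70, max_cac=50, ctr=0.010, benchmark_ctr=0.015))   # kill
print(kill_decision("cold", spend=350, cac=70, max_cac=50, ctr=0.030, benchmark_ctr=0.015))   # iterate
```

Checking spend first is the point of the gate: a creative that looks terrible at $150 of cold spend has not yet earned a verdict either way.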

What tools help analyze creative performance across multiple platforms?

Tools that help analyze creative performance across multiple platforms include Admetrics (with unified creative-level attribution, fatigue detection, and LTV tracking), Northbeam (creative analytics at premium pricing), and manual tracking combining native platform data in spreadsheets. Admetrics specifically offers creative-centric analytics designed for kill-or-scale decisions, tracking individual creative performance across Meta, Google, TikTok, and other channels with multi-metric scorecards, concept clustering, and production ROI analysis—capabilities missing from generic analytics platforms that focus on campaign or account-level reporting rather than creative-specific intelligence.

How does creative fatigue affect ad performance and when should creatives be replaced?

Creative fatigue affects ad performance through a cascading sequence where frequency increases first, followed by CTR declining 30-50%, CPC rising proportionally, and CAC increasing as higher costs combine with potentially lower conversion rates. This degradation typically occurs within five to seven days on Meta, seven to ten days on TikTok, and ten to fourteen days on Google, though timelines vary by platform and audience size. Creatives should be replaced proactively before performance degradation becomes severe—ideally when frequency approaches 2.5 for cold audiences or when CTR shows 15-20% decline from peak performance, not after CAC has already risen significantly.
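The two early replacement triggers named here, frequency and CTR decline from peak, are easy to check from daily data. A minimal sketch, assuming a hypothetical `fatigue_signals` helper, the 2.5 frequency level for cold audiences, and the lower 15% end of the CTR decline range as defaults:

```python
def fatigue_signals(frequency: float, ctr_history: list,
                    max_frequency: float = 2.5, max_ctr_drop: float = 0.15) -> list:
    """Return the early fatigue signals a creative is currently showing.

    ctr_history is the creative's daily CTR, oldest first.
    """
    if not ctr_history:
        raise ValueError("ctr_history must contain at least one value")

    peak = max(ctr_history)
    current = ctr_history[-1]
    drop_from_peak = (peak - current) / peak if peak > 0 else 0.0

    signals = []
    if frequency >= max_frequency:
        signals.append("frequency")
    if drop_from_peak >= max_ctr_drop:
        signals.append("ctr_decline")
    return signals


# CTR peaked at 2.4% and is now 1.9%, a drop of roughly 21% from peak.
print(fatigue_signals(frequency=1.8, ctr_history=[0.020, 0.024, 0.022, 0.019]))  # ['ctr_decline']
```

An empty result means neither trigger has fired; any signal is a prompt to line up a replacement before CAC starts to climb.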

What's the difference between killing creatives too fast versus too slow?

Killing creatives too fast (before 48-72 hours or the $300-500 minimum spend) creates false negatives: statistical noise leads you to abandon potential winners prematurely, which destroys testing efficiency and wastes production investment. Killing creatives too slowly (letting them run beyond seven to ten days despite clear underperformance) wastes budget on confirmed losers that could have gone to scaling winners or testing new candidates, directly destroying profitability. The optimal balance accepts 80-85% statistical confidence and compensates with higher testing volume, making more decisions faster rather than fewer decisions with perfect certainty, because creative fatigue timelines demand operational speed over statistical perfection.